Text2HD v.1

by asterixcool

Purpose: Upscale very low-quality text images to normal quality.

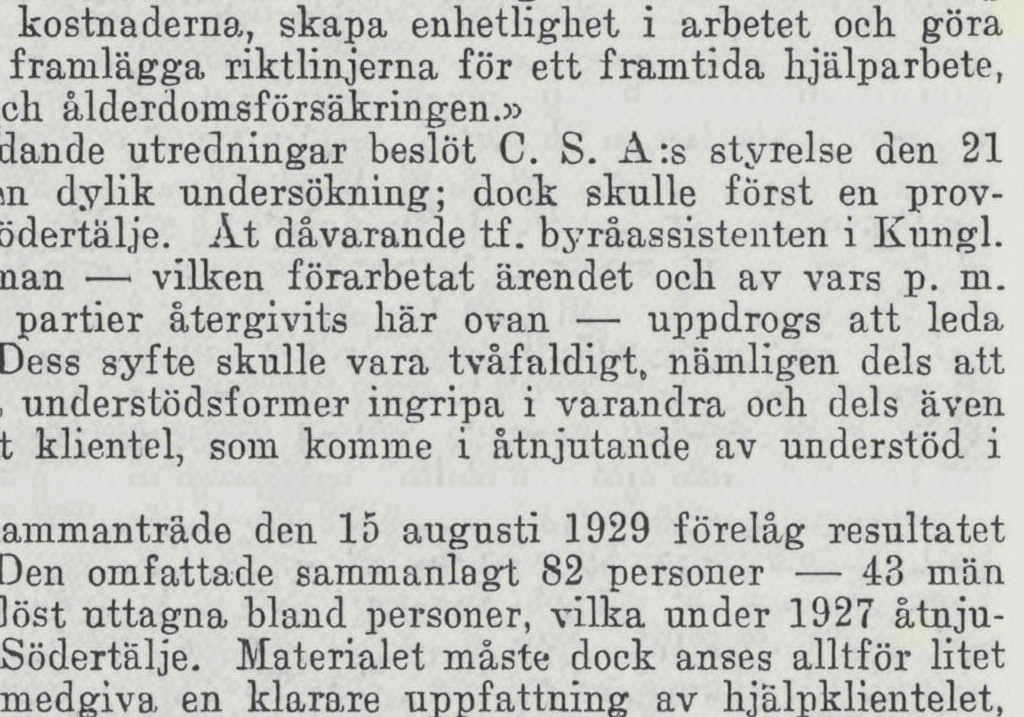

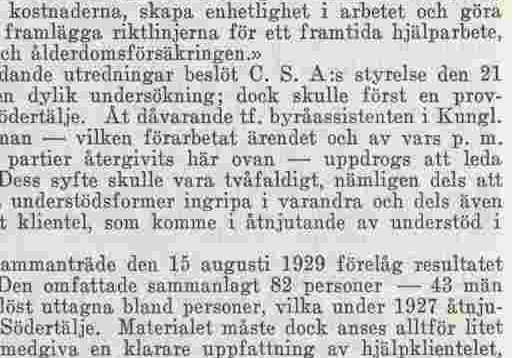

This 2x upscaling model is designed to improve the clarity and readability of low-quality text images. It works best on moderately sized text, which it restores to the quality of a clean, legible scan. Very small text may not be restored well, because the model is optimized for text above a certain minimum size. For best results, use input images in which the text is not excessively small.
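
As a quick illustration of what the 2x scale means for output dimensions, here is a minimal sketch. The helper name `upscaled_size` is hypothetical and not part of any tool shipped with the model; it only assumes the output is exactly the input size multiplied by the scale factor.

```python
def upscaled_size(width: int, height: int, scale: int = 2) -> tuple[int, int]:
    """Return the (width, height) of an image after integer upscaling.

    Assumption: the model enlarges both dimensions by exactly `scale`,
    which is 2 for this model according to the description above.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    return width * scale, height * scale


if __name__ == "__main__":
    # A 640x480 scan would come out at 1280x960.
    print(upscaled_size(640, 480))
```

This can help estimate memory or storage needs before processing a large batch of scans.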

| Property | Value |
|---|---|
| Architecture | RealPLKSR |
| Scale | 2x |
| Color Mode | |
| License | CC-BY-SA-4.0. Permits: private use, commercial use, distribution, modifications. Conditions: credit required, same license, state changes. Limitations: no liability or warranty. |
| Date | 2024-08-27 |
| Dataset | Scanned books and text |
| Dataset size | 8000 |
| Training iterations | 168000 |
| Training batch size | 8 |
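
To put the training figures in perspective, the following sketch works out how many samples the model saw and roughly how many passes over the dataset that represents. It assumes that each iteration consumes exactly one batch and that "Dataset size" counts individual training samples; neither is stated explicitly here, so treat the result as an estimate. The function name `training_stats` is hypothetical.

```python
def training_stats(iterations: int, batch_size: int, dataset_size: int) -> tuple[int, float]:
    """Return (samples_seen, approximate_epochs).

    Assumptions: one batch per iteration, and dataset_size is the
    number of individual training samples.
    """
    samples_seen = iterations * batch_size
    approx_epochs = samples_seen / dataset_size
    return samples_seen, approx_epochs


if __name__ == "__main__":
    # Values taken from the table above.
    samples, epochs = training_stats(iterations=168_000, batch_size=8, dataset_size=8_000)
    print(f"samples seen: {samples}")       # 1344000
    print(f"approximate epochs: {epochs}")  # 168.0
```

Under those assumptions, training covered about 1.34 million samples, or roughly 168 passes over the 8000-item dataset.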